Inter-rater agreement Kappas. a.k.a. inter-rater reliability or… | by Amir Ziai | Towards Data Science

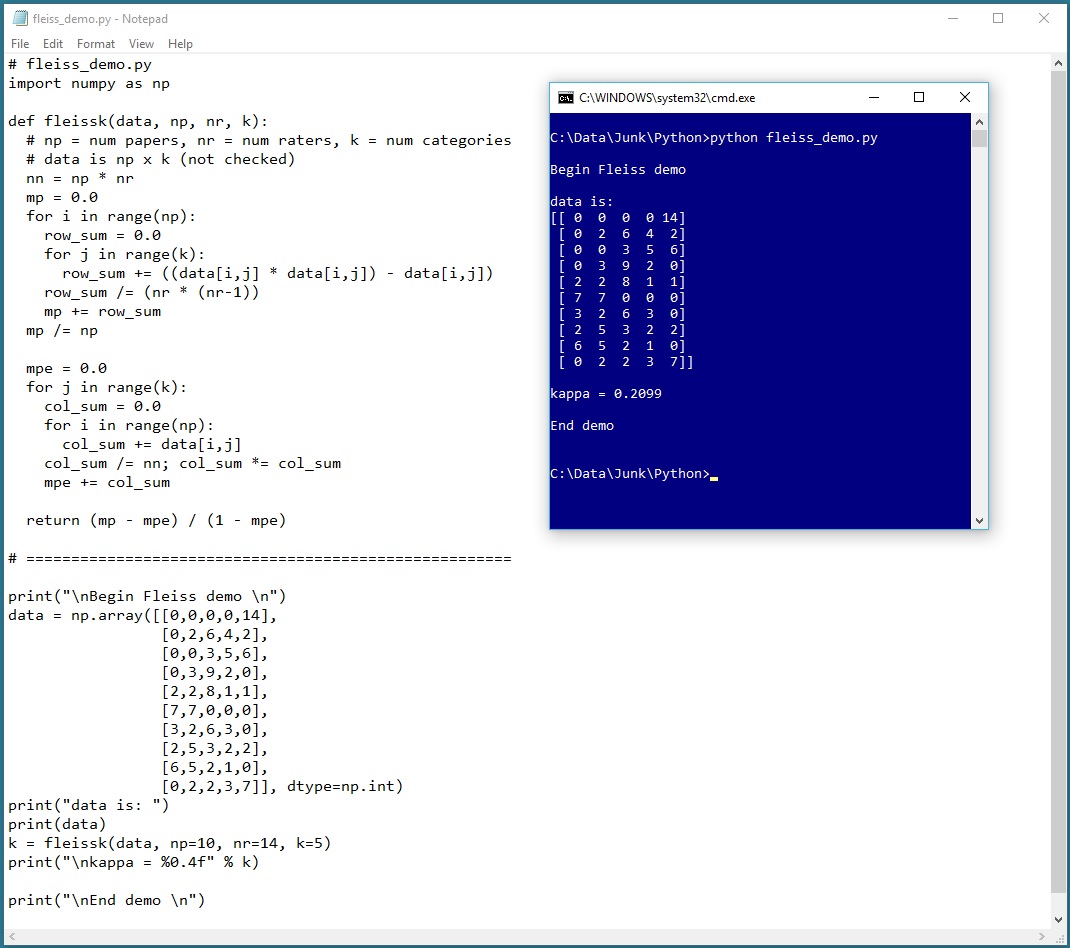

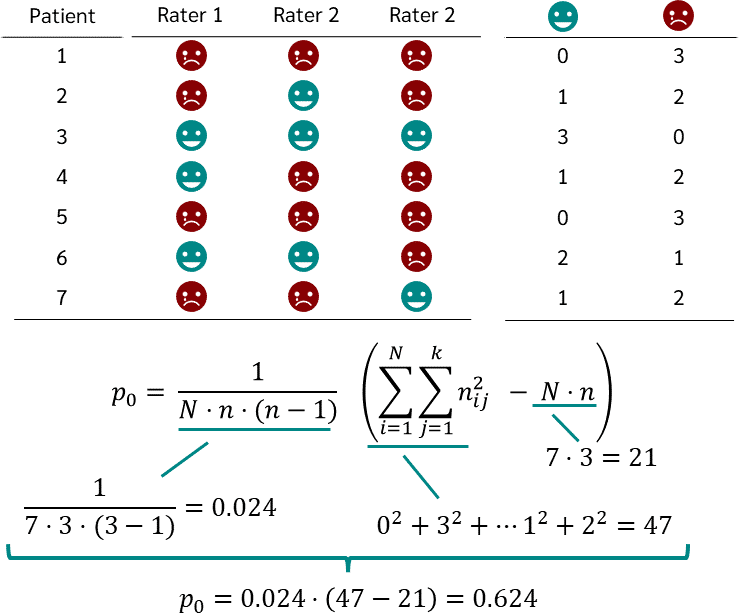

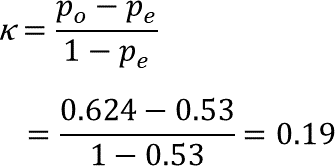

Example of Fleiss Kappa index. All calculations are made easy with just... | Download Scientific Diagram

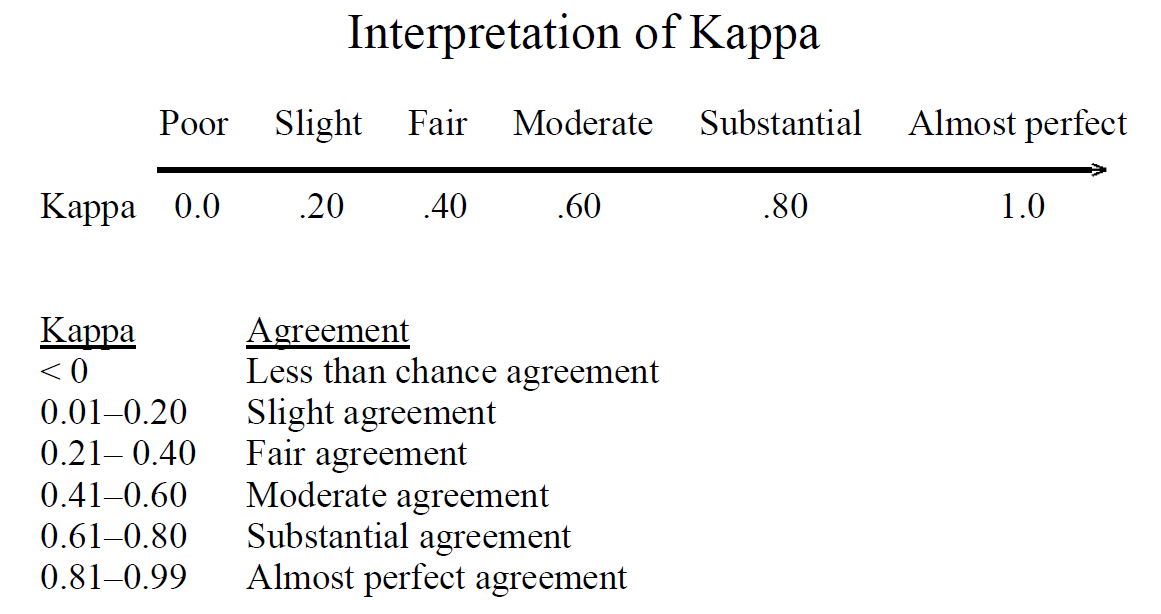

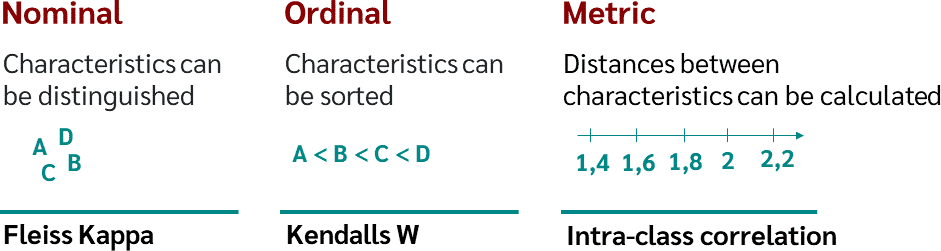

Using appropriate Kappa statistic in evaluating inter-rater reliability. Short communication on “Groundwater vulnerability and contamination risk mapping of semi-arid Totko river basin, India using GIS-based DRASTIC model and AHP techniques ...

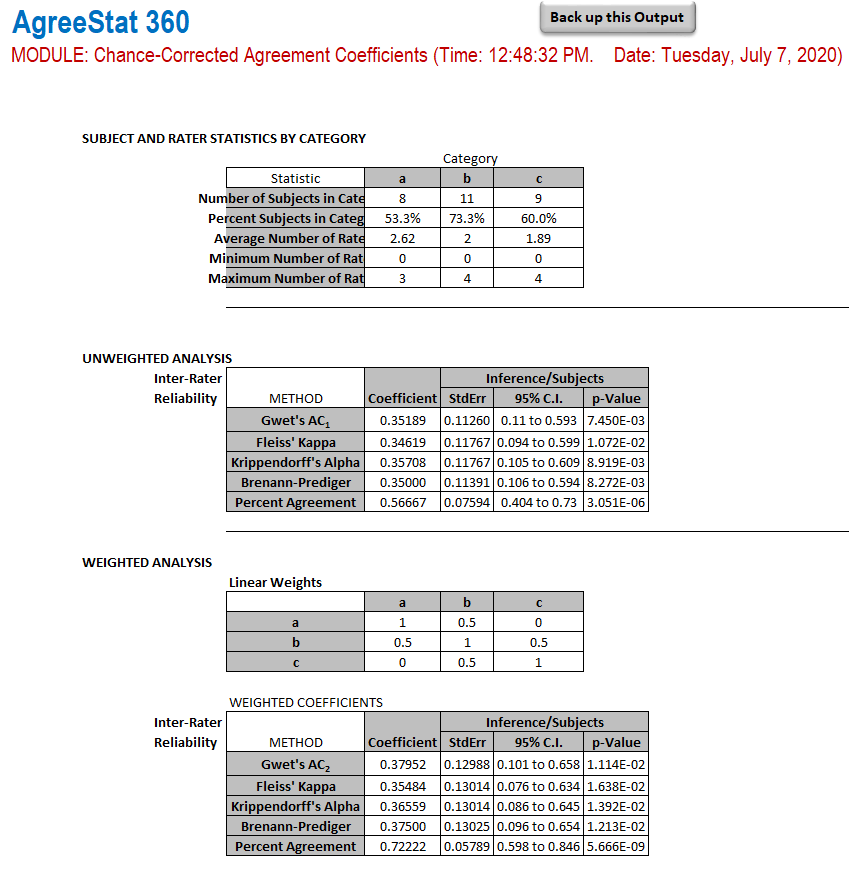

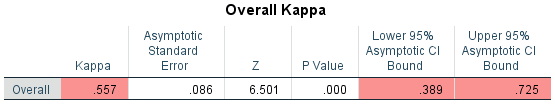

AgreeStat/360: computing weighted agreement coefficients (Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) with ratings in the form of a distribution of raters by subject and category

![Fleiss Kappa [Simply Explained] - YouTube](https://i.ytimg.com/vi/z4CiQPV0Mgw/hq720.jpg?sqp=-oaymwEhCK4FEIIDSFryq4qpAxMIARUAAAAAGAElAADIQj0AgKJD&rs=AOn4CLCJdRaZ4h71-nPW_BhBrIbFrIMWvg)